Massive datasets present unique challenges, particularly when specific ranges of data must be retrieved efficiently. B-trees, known for their logarithmic search time, are a standard tool for indexing and querying large key ranges. However, at scales such as 123B, conventional B-tree implementations can struggle to keep successor queries fast in practice, largely because each level of the tree may cost a disk access. To address this issue, researchers have explored techniques to optimize B-tree successor queries for datasets of this magnitude.

- Experts have developed novel algorithms and data structures that leverage the inherent properties of B-trees to efficiently locate successors within vast key ranges.

- These advancements often involve incorporating techniques such as preprocessing to reduce the number of disk accesses required during successor search operations.

Additionally, these developments aim to minimize the time complexity associated with successor queries, ensuring that even for extremely large datasets, retrieval remains efficient and scalable.
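As a rough illustration of what a successor query does, the sketch below walks a small in-memory B-tree from root to leaf, so the number of nodes visited (a stand-in for disk accesses) is bounded by the tree height. The `Node` class and `successor` function are hypothetical names introduced here; this is a minimal sketch of the basic query, not one of the optimized techniques mentioned above.

```python
from bisect import bisect_right
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """Hypothetical B-tree node: sorted keys, and len(keys) + 1 children unless it is a leaf."""
    keys: List[int]
    children: List["Node"] = field(default_factory=list)  # empty list means leaf


def successor(root: Node, key: int) -> Optional[int]:
    """Return the smallest stored key strictly greater than `key`, or None."""
    best = None
    node = root
    while node is not None:
        # Index of the first key in this node that is greater than `key`.
        i = bisect_right(node.keys, key)
        if i < len(node.keys):
            # Any candidate found deeper in child i is smaller than keys[i],
            # so overwriting `best` on the way down is safe.
            best = node.keys[i]
        node = node.children[i] if node.children else None
    return best


# A two-level tree: root keys separate the three leaves.
tree = Node([10, 20], [Node([1, 5]), Node([12, 17]), Node([25, 30])])
print(successor(tree, 5))   # 10
print(successor(tree, 10))  # 12
print(successor(tree, 30))  # None
```

Each loop iteration touches exactly one node, which is why reducing tree height or caching upper levels directly reduces the cost of the query.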

A Fresh Benchmark for LLMs

The 123B Dataset is an enormous collection of textual data that has emerged as a leading tool for evaluating the performance of large language models. This extensive dataset, with its rich content, pushes LLMs to their limits, allowing researchers and developers to measure the progress of these AI systems.

The 123B Dataset has become instrumental in the field of natural language processing, spurring innovation and deepening our understanding of how LLMs can be applied effectively to a broad range of tasks.

Scaling 123B Parameter Models on Commodity Hardware

Training large language models (LLMs) with billions of parameters requires substantial computational resources. While high-performance computing clusters are often employed for this task, running such massive models on commodity hardware presents a compelling alternative. This approach has the potential to broaden access to powerful AI capabilities, enabling researchers and developers to experiment with LLMs without relying on expensive infrastructure. Achieving this requires techniques that optimize model architectures and training procedures for efficient execution on common hardware.

- Researchers have made significant progress in developing techniques that can effectively scale LLMs on commodity hardware. These advancements include parameter pruning, which reduces the number of parameters required for adequate performance.

- Furthermore, GPUs are increasingly being integrated into commodity devices, providing a boost to computational capabilities. This trend is making it possible to train and deploy larger models on a wider range of hardware platforms.
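To make the pruning idea concrete, here is a minimal sketch of magnitude pruning, one common variant in which the weights with the smallest absolute values are set to zero. The text above does not say which pruning method is meant, so this choice, the function name `magnitude_prune`, and the flat list of weights are all assumptions made for illustration.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    k = int(sparsity * len(weights))  # number of weights to remove
    if k == 0:
        return list(weights)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = set(order[:k])
    return [0.0 if i in pruned else w for i, w in enumerate(weights)]


print(magnitude_prune([0.1, -2.0, 0.05, 3.0], sparsity=0.5))
# [0.0, -2.0, 0.0, 3.0]
```

In practice the zeros only save memory or compute when the storage format or hardware can exploit sparsity, and pruned models are usually fine-tuned afterwards to recover accuracy.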

The ongoing research in this field holds promise for advancing the accessibility and impact of large language models. By making LLMs more widely available, we can promote innovation across diverse domains, from education to healthcare to scientific discovery.

Efficient Training of Massive Parameter Neural Networks

Training neural networks with a vast number of parameters, such as models with 123 billion parameters, presents significant challenges. These large-scale systems demand substantial computational resources and time for effective training.
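A back-of-the-envelope calculation gives a sense of the scale involved. The sketch below assumes 2 bytes per parameter for 16-bit weights, and roughly 16 bytes per parameter for mixed-precision training with the Adam optimizer (16-bit weights and gradients plus 32-bit master weights and two 32-bit optimizer moments). These per-parameter figures are assumptions of this example, and activations and other overheads are ignored.

```python
PARAMS = 123e9  # 123 billion parameters


def gigabytes(params: float, bytes_per_param: float) -> float:
    """Memory in decimal gigabytes for a given per-parameter cost."""
    return params * bytes_per_param / 1e9


weights_fp16 = gigabytes(PARAMS, 2)          # storing the weights only
training_mixed_adam = gigabytes(PARAMS, 16)  # weights + gradients + optimizer state

print(f"16-bit weights:            {weights_fp16:.0f} GB")         # 246 GB
print(f"Mixed-precision Adam state: {training_mixed_adam:.0f} GB")  # 1968 GB
```

Under these assumptions the training state alone is close to 2 TB, which is far more than a single accelerator holds and is why the state has to be split across devices or reduced.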

To address these obstacles, researchers have developed training techniques aimed at improving speed and reducing cost. These include parameter-efficient training, faster backpropagation, and distributed training across multiple machines.
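Distributed training can take several forms; the sketch below simulates the simplest one, data parallelism, in plain Python. Each simulated worker computes a gradient on its own shard of the data, and the gradients are averaged before the shared weight is updated, with the averaging standing in for an all-reduce across machines. The toy model `y ≈ w * x`, the function names, and the data are all hypothetical and chosen only to keep the example self-contained.

```python
from typing import List, Tuple

Shard = Tuple[List[float], List[float]]  # (inputs, targets) held by one worker


def grad_mse(w: float, xs: List[float], ys: List[float]) -> float:
    """Gradient of mean squared error for the model y ≈ w * x on one shard."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def data_parallel_step(w: float, shards: List[Shard], lr: float) -> float:
    """One update: local gradients per worker, then an average (simulated all-reduce)."""
    local_grads = [grad_mse(w, xs, ys) for xs, ys in shards]
    avg_grad = sum(local_grads) / len(local_grads)
    return w - lr * avg_grad


# Two workers, each holding half of a dataset generated by y = 2 * x.
shards = [([1.0, 2.0], [2.0, 4.0]), ([3.0, 4.0], [6.0, 8.0])]
w = 0.0
for _ in range(200):
    w = data_parallel_step(w, shards, lr=0.01)
print(round(w, 4))  # 2.0
```

With equal-sized shards, the averaged gradient equals the gradient over the full dataset, so the workers reach the same result as a single machine while each processes only part of the data.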

These advancements make it practical to train larger models, unlocking their potential for solving complex tasks in areas such as natural language processing, computer vision, and scientific discovery.

Exploring the Capabilities of a 123B Parameter Transformer

A 123B parameter transformer stands as a monumental achievement in the field of artificial intelligence. Delving into its vast architecture reveals a myriad of capabilities, pushing the boundaries of what's possible. From generating human-quality text to performing complex calculations, this model showcases the transformative power of deep learning.

- Experts are eagerly exploring its applications in a broad range of fields, including machine translation.

- The potential of such a powerful tool is vast, offering significant opportunities to change the way we engage with technology.

Nonetheless, it's essential to approach its development and deployment with caution. Addressing ethical concerns and ensuring fairness are crucial steps in using this technology for the benefit of humanity.

Fine-tuning 123B for Code Synthesis and Interpretation

The massive language model 123B shows remarkable potential in the realm of code. Through fine-tuning, the model can be adapted to generate code across diverse programming languages. Its capabilities also extend to understanding and interpreting existing code, helping developers identify issues and improve code quality. This combination of code generation and understanding makes 123B a valuable asset for modern software development.

Ross Bagley Then & Now!

Ross Bagley Then & Now! Hailie Jade Scott Mathers Then & Now!

Hailie Jade Scott Mathers Then & Now! Nancy McKeon Then & Now!

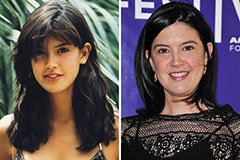

Nancy McKeon Then & Now! Phoebe Cates Then & Now!

Phoebe Cates Then & Now! Andrew McCarthy Then & Now!

Andrew McCarthy Then & Now!